Think about the last time you used a digital scale. You probably trusted the number on the screen without a second thought. But in a high-stakes manufacturing plant, that "trust" can be a liability. If a medical device is off by a fraction of a millimeter or a chemical dose is slightly wrong, the result isn't just a defective product; it's a potential patient safety crisis. That is why equipment calibration and validation aren't just boxes to check for an audit; they are the primary defense against catastrophic failure.

The Core Difference: Calibration vs. Validation

People often use these terms interchangeably, but they do completely different jobs. If you confuse the two, you'll likely fail your next quality audit.

Calibration is the process of comparing a measurement device against a known, traceable standard to see how accurate it is. It's basically a "health check" for your tools. You're asking: "Is this device telling me the truth?"

Validation, on the other hand, is the evidence that a piece of equipment consistently does what it's supposed to do for a specific process. Following GAMP 5 guidelines, validation asks: "Does this machine actually work for the job I'm using it for?"

| Feature | Calibration | Validation |
|---|---|---|
| Primary Goal | Accuracy and Traceability | Consistency and Fitness for Purpose |
| Key Question | Is the reading correct? | Does the process work? |
| Typical Frequency | Periodic (e.g., every 6-12 months) | Once at install, then periodically or after major changes |
| Reference Point | NIST or SI Units | User Requirement Specifications (URS) |

Calibration Requirements and Traceability

You can't just say a tool is calibrated because a technician looked at it. To be compliant with ISO 13485:2016 or ISO 9001:2015, you need an unbroken chain of traceability. This means your device is compared to a standard, which was compared to a higher standard, all the way up to the International System of Units (SI).
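
One way to picture that chain is as a linked list of standards. The sketch below is only an illustration: the `Standard` class, its fields, and `is_traceable_to_si` are hypothetical names, not taken from any ISO text or calibration tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Standard:
    """One link in a traceability chain (hypothetical model)."""
    name: str
    calibrated_against: Optional["Standard"] = None
    realizes_si: bool = False  # True only for the top of the chain


def is_traceable_to_si(device: Standard, max_links: int = 20) -> bool:
    """Walk upward link by link; the chain is unbroken only if it reaches SI."""
    node: Optional[Standard] = device
    for _ in range(max_links):
        if node is None:
            return False  # a link has no documented parent: chain is broken
        if node.realizes_si:
            return True
        node = node.calibrated_against
    return False  # chain too long or circular; treat as not traceable


national = Standard("national lab reference", realizes_si=True)
working = Standard("accredited lab working standard", calibrated_against=national)
micrometer = Standard("shop-floor micrometer", calibrated_against=working)
orphan = Standard("micrometer with no certificate")

print(is_traceable_to_si(micrometer))  # True
print(is_traceable_to_si(orphan))      # False
```

In a real system each link would also carry a certificate number, a date, and an uncertainty value; the point here is just that one missing parent breaks the whole chain.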

If you're in medical device manufacturing, the rules are strict. You need unique IDs for every piece of gear and documented uncertainty calculations. A good rule of thumb is the Test Uncertainty Ratio (TUR); your measurement uncertainty should be at least four times smaller than the tolerance you are measuring (TUR ≥ 4:1). If you ignore this, you risk "false acceptance," where you think a part is good when it's actually out of spec.
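
The 4:1 check is simple enough to automate. Here is a minimal sketch, assuming the tolerance and the expanded uncertainty are both expressed as symmetric ± values in the same units; the function names and example numbers are made up for illustration.

```python
def compute_tur(tolerance: float, expanded_uncertainty: float) -> float:
    """TUR for a symmetric +/- tolerance and a +/- expanded uncertainty."""
    if tolerance <= 0 or expanded_uncertainty <= 0:
        raise ValueError("tolerance and uncertainty must be positive")
    return tolerance / expanded_uncertainty


def meets_tur(tolerance: float, expanded_uncertainty: float,
              minimum: float = 4.0) -> bool:
    """True when the ratio is at least the required minimum (4:1 by default)."""
    return compute_tur(tolerance, expanded_uncertainty) >= minimum


# Example values are invented, not taken from a real certificate.
print(meets_tur(tolerance=0.5, expanded_uncertainty=0.1))   # True  (ratio 5:1)
print(meets_tur(tolerance=0.5, expanded_uncertainty=0.25))  # False (ratio 2:1)
```

Running a check like this against every certificate as it arrives is one way to catch a weak ratio before the instrument goes back on the floor.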

Environmental factors are the silent killers of calibration. According to NIST, nearly 58% of out-of-tolerance incidents happen because of temperature swings greater than ±5°C. If your lab is a sauna in the summer and a freezer in the winter, your fancy micrometers are lying to you. Most standards suggest keeping your environment around 20°C ±2°C with 40% relative humidity.
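
If you already log temperature and humidity, flagging bad conditions before a measurement is a few lines of code. This is a rough sketch: `EnvReading` and `environment_ok` are hypothetical names, and the ±10% humidity band is an assumption, since the guideline above only gives a 40% target.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvReading:
    temperature_c: float
    relative_humidity_pct: float


def environment_ok(
    reading: EnvReading,
    target_temp_c: float = 20.0,
    temp_tol_c: float = 2.0,     # from the 20°C ±2°C guideline above
    target_rh_pct: float = 40.0,
    rh_tol_pct: float = 10.0,    # ASSUMPTION: no humidity band is given in the text
) -> bool:
    """Return True only when both temperature and humidity are inside their bands."""
    temp_ok = abs(reading.temperature_c - target_temp_c) <= temp_tol_c
    rh_ok = abs(reading.relative_humidity_pct - target_rh_pct) <= rh_tol_pct
    return temp_ok and rh_ok


print(environment_ok(EnvReading(21.5, 45.0)))  # True
print(environment_ok(EnvReading(26.0, 40.0)))  # False: too warm
```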

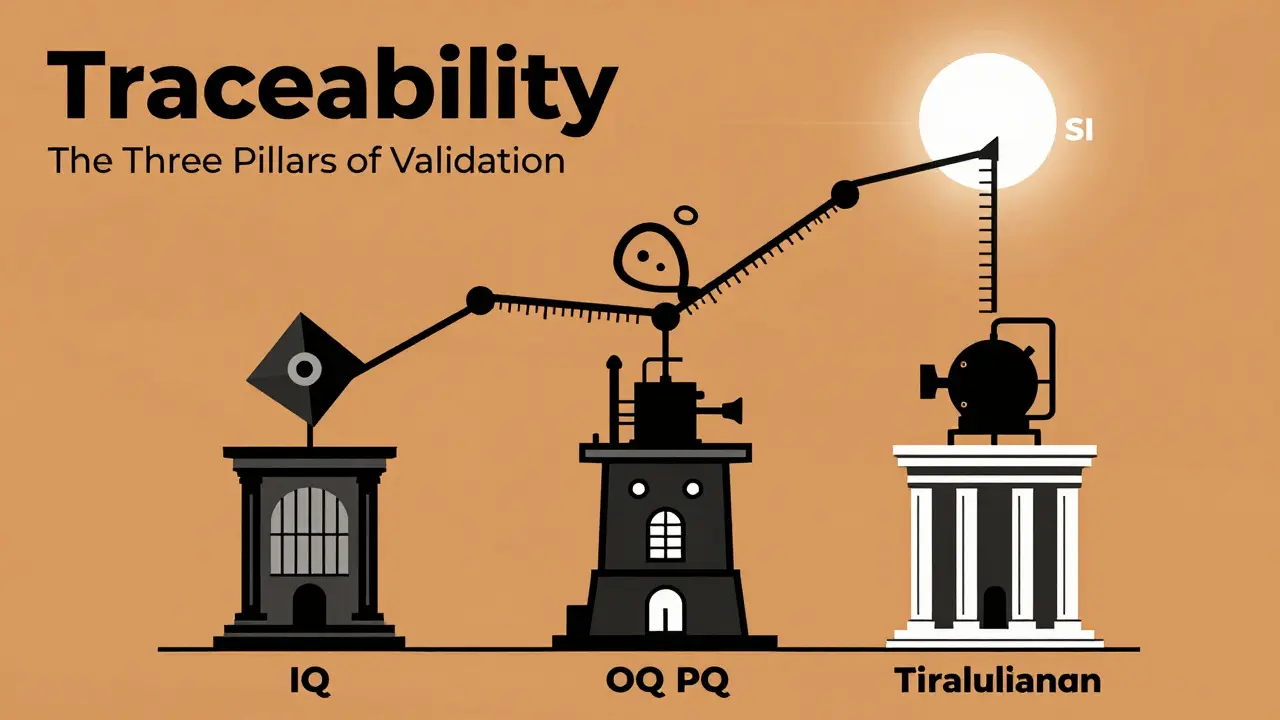

The Three Pillars of Validation (IQ, OQ, PQ)

Validation is a journey, not a single event. For complex production systems, this process can take 18 to 24 months and cost hundreds of thousands of dollars. It follows a specific three-step sequence:

- Installation Qualification (IQ): This is the "Did we plug it in right?" phase. You verify that the equipment was delivered as ordered and installed according to the manufacturer's specs.

- Operational Qualification (OQ): This is the "Does it turn on and do the basics?" phase. You test the equipment's limits to ensure it operates correctly across its entire intended range.

- Performance Qualification (PQ): This is the "Does it actually make a good product?" phase. You run the equipment under real-world conditions over time to prove it consistently produces a quality result.
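
The order matters: a PQ result means little if OQ was never passed. A minimal sketch of how a record system might enforce the sequence is below. The `QualificationRecord` class is a hypothetical illustration, not something defined by GAMP 5 or any regulation.

```python
from enum import IntEnum


class Phase(IntEnum):
    IQ = 1
    OQ = 2
    PQ = 3


class QualificationRecord:
    """Tracks passed phases and refuses to record them out of order."""

    def __init__(self, equipment_id: str) -> None:
        self.equipment_id = equipment_id
        self._passed = []

    def record_pass(self, phase: Phase) -> None:
        if self.validated:
            raise ValueError("all three phases are already recorded")
        expected = Phase(len(self._passed) + 1)  # next phase in the sequence
        if phase != expected:
            raise ValueError(f"{phase.name} recorded before {expected.name} passed")
        self._passed.append(phase)

    @property
    def validated(self) -> bool:
        return len(self._passed) == len(Phase)


record = QualificationRecord("autoclave-01")  # hypothetical equipment ID
record.record_pass(Phase.IQ)
record.record_pass(Phase.OQ)
record.record_pass(Phase.PQ)
print(record.validated)  # True
```

Trying to record PQ straight after IQ raises a `ValueError`, which is the behavior you want from any system that holds validation evidence.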

Setting Your Calibration Intervals

Should you calibrate every month? Every year? Many companies blindly follow the manufacturer's manual, but that's often a waste of money. A more intelligent approach is the risk-based method (like Method 5 from SAE AS9100D).

Take a look at your historical data. If you've calibrated a scale every three months for two years and it's never drifted, why keep doing it? Some biomedical engineers have successfully extended intervals from quarterly to semiannually based on stability data, saving thousands in labor and service costs. However, if you're using something like a nuclear density gauge in highway construction, you can't wait a year: radiation sources decay, requiring quarterly verification.
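
To make the "look at your history" idea concrete, here is a deliberately simple decision rule. It is a sketch under stated assumptions, not a published method: the thresholds (`min_history`, `stable_fraction`, `max_months`) and the doubling and halving steps are all hypothetical and would need to be justified by your own risk analysis.

```python
def suggest_interval_months(
    current_months: int,
    as_found_errors: list,
    tolerance: float,
    min_history: int = 8,          # ASSUMPTION: e.g. two years of quarterly results
    stable_fraction: float = 0.5,  # ASSUMPTION: "stable" = within half the tolerance
    max_months: int = 12,
) -> int:
    """Suggest a new interval from as-found errors recorded at past calibrations."""
    if len(as_found_errors) < min_history:
        return current_months  # not enough evidence to change anything
    worst = max(abs(e) for e in as_found_errors)
    if worst > tolerance:
        return max(1, current_months // 2)  # found out of tolerance: shorten
    if worst <= stable_fraction * tolerance:
        return min(max_months, current_months * 2)  # consistently stable: extend
    return current_months


# Eight quarterly checks, all well inside a ±0.1 tolerance (invented data).
print(suggest_interval_months(3, [0.01] * 8, tolerance=0.1))  # 6
```

Note that a rule like this never applies to equipment with a known physical decay mechanism, such as the density gauge mentioned above; those intervals are fixed by the physics, not the history.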

For those in clinical labs, the Clinical Laboratory Improvement Amendments (CLIA) set the pace. High-complexity tests usually need verification every six months, while basic "waived" tests might not need routine calibration at all, provided you follow the manufacturer's verification protocols.

Common Pitfalls and Digital Transformation

The biggest headache for quality managers is the "documentation burden." Small manufacturers often spend over 15 hours a week just managing paper records. This is where the industry is shifting. The FDA's 2024 Calibration Modernization Initiative is pushing for electronic records for Class II and III devices by the end of 2026.

Moving to cloud-based calibration management software can reduce audit prep time by over 60%. Instead of digging through filing cabinets, you can generate a certificate in seconds. But be careful: automated systems can sometimes create "traceability gaps" if they don't properly document the chain-of-custody for the reference standards used.

Another common mistake is neglecting the AI side of things. With the March 2024 amendment to ISO 13485, if you use AI or Machine Learning for measurements, you can't just calibrate it once. You need "continuous validation protocols" to monitor algorithm drift, as AI can "learn" its way into becoming inaccurate over time.

What happens if my equipment is found to be out of calibration?

You can't just fix the tool and move on. You must perform an "impact assessment." This means looking back at every product measured with that tool since the last successful calibration. If the drift was significant, you may need to recall products or re-test batches to ensure they actually meet specifications.
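
If your measurement records are digital, the first step of that look-back is a simple filter. The sketch below assumes each record stores a lot ID, an instrument ID, and a date; the `MeasurementRecord` fields and all the example IDs are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MeasurementRecord:
    lot_id: str
    instrument_id: str
    measured_on: date


def lots_at_risk(records, instrument_id, last_good_calibration, failure_found_on):
    """Lots measured with the instrument between the last good calibration
    and the date the failure was found (both dates inclusive, to be conservative)."""
    return sorted({
        r.lot_id
        for r in records
        if r.instrument_id == instrument_id
        and last_good_calibration <= r.measured_on <= failure_found_on
    })


records = [
    MeasurementRecord("LOT-1", "CAL-007", date(2025, 1, 10)),
    MeasurementRecord("LOT-2", "CAL-007", date(2025, 3, 5)),
    MeasurementRecord("LOT-3", "CAL-007", date(2025, 4, 20)),
    MeasurementRecord("LOT-4", "CAL-099", date(2025, 4, 1)),
]
print(lots_at_risk(records, "CAL-007", date(2025, 2, 1), date(2025, 5, 1)))
# ['LOT-2', 'LOT-3']
```

The list is only the starting point. Deciding whether each lot needs re-testing or recall still depends on how far the instrument had drifted.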

Is NIST traceability the only standard?

No, but it's the most common in the US. European manufacturers often require traceability to BIPM (International Bureau of Weights and Measures) standards. Companies operating in both the US and EU often have to maintain dual systems to satisfy both FDA and EU MDR 2017/745 requirements.

How often should I perform validation?

Full validation (IQ/OQ/PQ) is typically done once during installation. However, you must perform "re-validation" whenever there is a significant change. This includes moving the equipment to a new location, updating the software version, or modifying the critical components of the machine.

Can I use a third-party lab for calibration?

Yes, but you must ensure the lab is accredited (e.g., ISO/IEC 17025). You need to verify that their standards are traceable to SI units and that they provide a calibration certificate stating the actual measurement uncertainty, not just a "pass/fail" stamp.

What is the difference between calibration verification and full calibration?

Calibration verification is a quick check, like using a known weight to see if a scale is roughly correct, to ensure the tool is still fit for use. Full calibration is a comprehensive process that involves multiple measurement points and adjustments to align the tool perfectly with a certified standard.
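
In code terms, the difference is roughly one comparison versus a table of errors. This is a simplified sketch with hypothetical function names and invented readings; it covers only the measurement side and does not model the adjustment step of a full calibration.

```python
def verification_passes(reading: float, reference_value: float,
                        tolerance: float) -> bool:
    """Single-point check: is the reading close enough to one known reference?"""
    return abs(reading - reference_value) <= tolerance


def calibration_errors(readings_by_reference: dict) -> dict:
    """Multi-point view: error (reading minus reference) at each reference value."""
    return {ref: reading - ref for ref, reading in readings_by_reference.items()}


# Quick check with a single 100 g reference weight (invented numbers).
print(verification_passes(100.02, 100.0, tolerance=0.05))  # True

# Full calibration looks at several points across the range.
errors = calibration_errors({0.0: 0.0, 50.0: 50.5, 100.0: 100.25})
print(errors[50.0])  # 0.5
```

A scale can pass the single-point check and still be off elsewhere in its range, which is why the quick check supplements full calibration rather than replacing it.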

Next Steps for Your Quality Program

If you're just starting or trying to clean up a messy system, start with an equipment inventory. List every single device that affects product quality. Classify them by risk: high-precision tools get tighter intervals, while basic thermometers get more breathing room.
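
Even a spreadsheet-level model gets you started. The sketch below turns a risk-classified inventory into a calibration schedule. The interval values, the `Equipment` fields, and the example IDs are all hypothetical placeholders, not recommendations.

```python
from dataclasses import dataclass

# ASSUMPTION: illustrative intervals only; set real ones from your own risk analysis.
INTERVAL_MONTHS_BY_RISK = {"high": 3, "medium": 6, "low": 12}


@dataclass(frozen=True)
class Equipment:
    equipment_id: str
    description: str
    risk: str  # "high", "medium", or "low"


def build_schedule(inventory):
    """Map each equipment ID to a calibration interval in months."""
    schedule = {}
    for item in inventory:
        if item.risk not in INTERVAL_MONTHS_BY_RISK:
            raise ValueError(f"{item.equipment_id}: unknown risk class {item.risk!r}")
        schedule[item.equipment_id] = INTERVAL_MONTHS_BY_RISK[item.risk]
    return schedule


inventory = [
    Equipment("MIC-001", "digital micrometer", "high"),
    Equipment("THM-014", "warehouse thermometer", "low"),
]
print(build_schedule(inventory))  # {'MIC-001': 3, 'THM-014': 12}
```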

Next, audit your environment. If your floor is fluctuating by 10 degrees, spend your budget on an environmental chamber before buying a more expensive sensor. Finally, move away from paper. Whether it's a dedicated tool like GageList or a custom ERP module, digital records are the only way to survive a modern regulatory audit without losing your mind.

6 Comments

Goodwin Colangelo- 4 April 2026

The bit about TUR is spot on. Most people just glance at the cert and ignore the uncertainty, but that's how you end up with a massive batch of scrap. If you're running tight tolerances, you really gotta push for that 4:1 ratio or you're basically gambling with your quality.

Divine Manna- 4 April 2026

The distinction between calibration and validation is an ontological necessity in any rigorous quality system. One addresses the intrinsic veracity of the instrument, while the other examines the systemic efficacy of the process. It is a common failure of the mediocre mind to conflate the two, yet such confusion is precisely what leads to the industrial entropy we see in lower-tier manufacturing plants.

Will Baker- 5 April 2026

Sure, let's just trust the cloud. Because nothing says "secure and compliant" like putting your entire traceability chain on a server owned by some giant corporation that could vanish or have a glitch tomorrow. I'm sure the FDA will love that during an audit.

Joey Petelle- 6 April 2026

Imagine thinking a cloud-based system is a "modernization" and not just a way for some Silicon Valley hack to charge you a monthly subscription for a digital filing cabinet. Truly an American masterpiece of inefficiency. We've replaced actual engineering rigor with a fancy UI and called it progress. Pure poetry.

Brian Shiroma- 6 April 2026

Oh wow, a 24-month validation cycle. Because spending two years and hundreds of thousands of dollars to prove a machine actually works is exactly how we keep the economy booming. Absolutely thrilling stuff.

Aysha Hind- 8 April 2026

The "modernization initiative" smells like a total setup to let the government track every single micro-adjustment in real-time. Why do they suddenly care about electronic records in 2026? It's a digital leash, plain and simple. They want a back door into every lab's data so they can flag "anomalies" that aren't even anomalies, just the system breathing. This whole push is a chaotic mess designed to strip away the last bit of autonomy small shops have left. Total garbage.